Make Your AI Remember! A Guide to n8n's "Central Memory" Architecture

Solving "AI Amnesia" with Normalization and Hydration

Ran! Listen to this! I built an AI chatbot with n8n, but it doesn't remember our conversations at all!

*sigh* That's the classic "AI Amnesia" problem right there.

Amnesia? Like the AI has memory loss? Yesterday I told it "My name is Tanaka," and today it asked me "What's your name?" again!

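Yes, exactly. The thing is, LLMs are fundamentally "stateless." The model keeps nothing between API requests. Each call only knows what you send with it, so as soon as a request finishes, the conversation is effectively forgotten.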

*Stateless refers to a system design where the system doesn't retain information from previous interactions and starts fresh with each request.

So does that mean the memory features I added separately to my WhatsApp and Slack workflows were pointless...?

(I knew this would happen...) That's called the "Per-flow State" approach, and unfortunately it has limits. Your Slack agent won't know anything you discussed on WhatsApp, and the information often disappears when a session expires.

Fix this now! An AI that keeps asking customers the same questions over and over is completely useless!

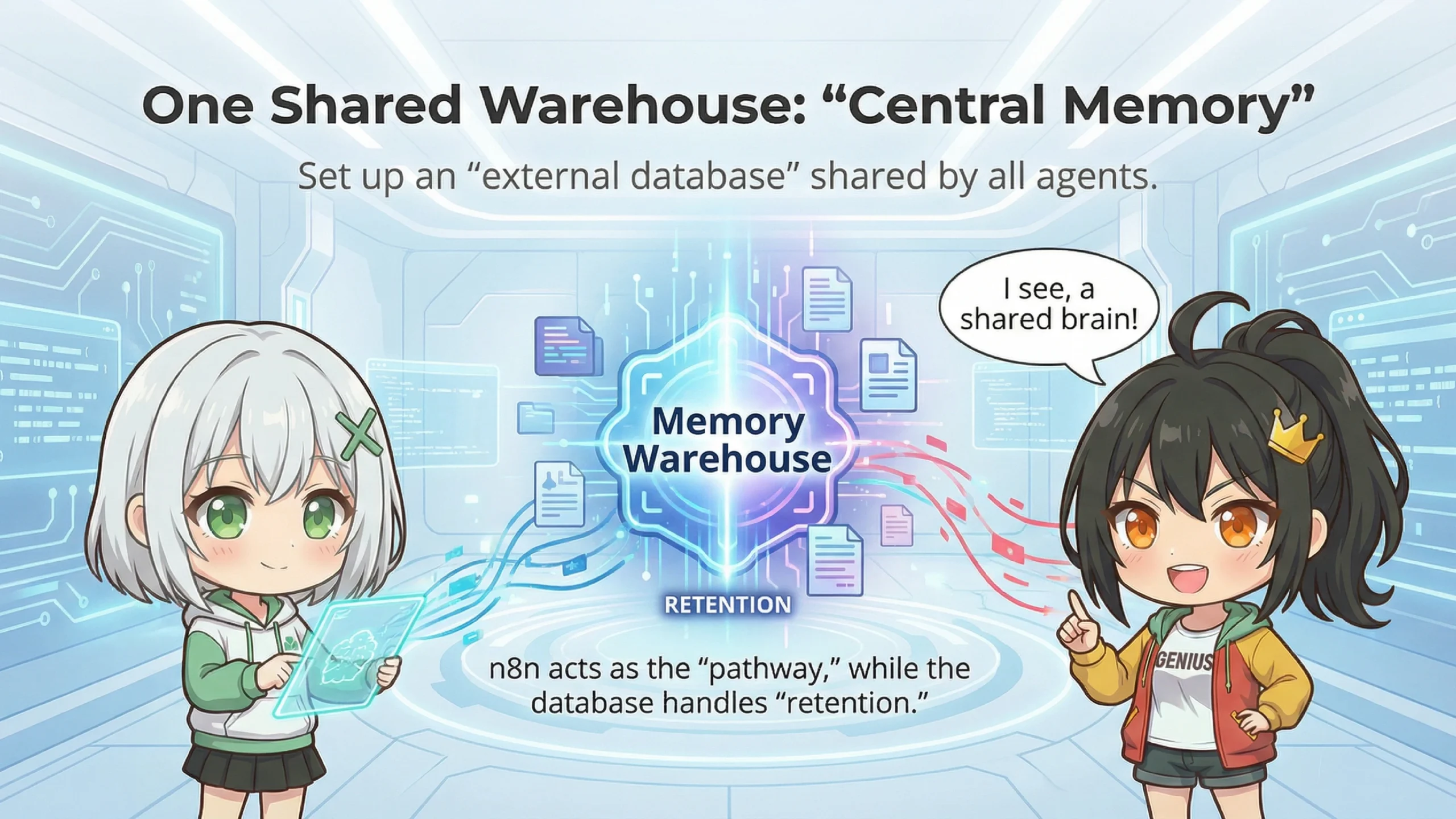

Don't worry, there's a solution. With the "Central Memory" architecture, your AI can properly remember conversations.

Central Memory? What's that, like putting all the AI's brains in one place?

Nice way to put it! Simply put, you set up an "external database" that all your AI agents and workflows share. n8n becomes the "router" for information, while the "storage" of memories is delegated to the external database.

I see... So whether someone talks through WhatsApp or Slack, they're all looking at the same "memory warehouse"?

Exactly! I'd recommend PostgreSQL for the database, and using a managed service like Supabase often makes the setup much easier.
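To make the idea concrete, here is a minimal TypeScript sketch in which an in-memory array stands in for the shared PostgreSQL database. The `CentralMemory` class, its methods, and the two "workflow" functions are hypothetical names used only for illustration. In a real setup, `save` and `historyFor` would be Postgres (or Supabase) nodes that read and write one shared table.

```typescript
// Hypothetical stand-in for the shared database. In production this would be
// a PostgreSQL table that every workflow reads and writes.
type Channel = "whatsapp" | "slack";

interface StoredMessage {
  userId: string; // one ID per person, shared across channels
  channel: Channel;
  role: "user" | "assistant";
  text: string;
}

class CentralMemory {
  private messages: StoredMessage[] = [];

  save(msg: StoredMessage): void {
    this.messages.push(msg);
  }

  // Everything this user has said on ANY channel, oldest first.
  historyFor(userId: string): StoredMessage[] {
    return this.messages.filter((m) => m.userId === userId);
  }
}

// One store shared by all workflows, instead of per-flow state.
const memory = new CentralMemory();

// Hypothetical WhatsApp workflow: writes to the shared store.
function whatsappWorkflow(userId: string, text: string): void {
  memory.save({ userId, channel: "whatsapp", role: "user", text });
}

// Hypothetical Slack workflow: reads history written by other channels.
function slackWorkflowContext(userId: string): string[] {
  return memory.historyFor(userId).map((m) => `[${m.channel}] ${m.text}`);
}

whatsappWorkflow("user_0042", "My name is Tanaka");
const slackSees = slackWorkflowContext("user_0042");
// slackSees → ["[whatsapp] My name is Tanaka"]
```

With per-flow state, the Slack side would start empty. Here it sees the WhatsApp message because both workflows read the same store, keyed by one user ID.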

📊 Traditional n8n Memory vs Central Memory

| Feature | Traditional Memory | Central Memory |
|---|---|---|
| Persistence | Often lost when session expires | Semi-permanent storage |
| Sharing Scope | Single workflow only | All workflows & channels |
| Searchability | Recent entries only | Full-text search & SQL capable |

I see, that's a big difference. But how do you actually implement it?

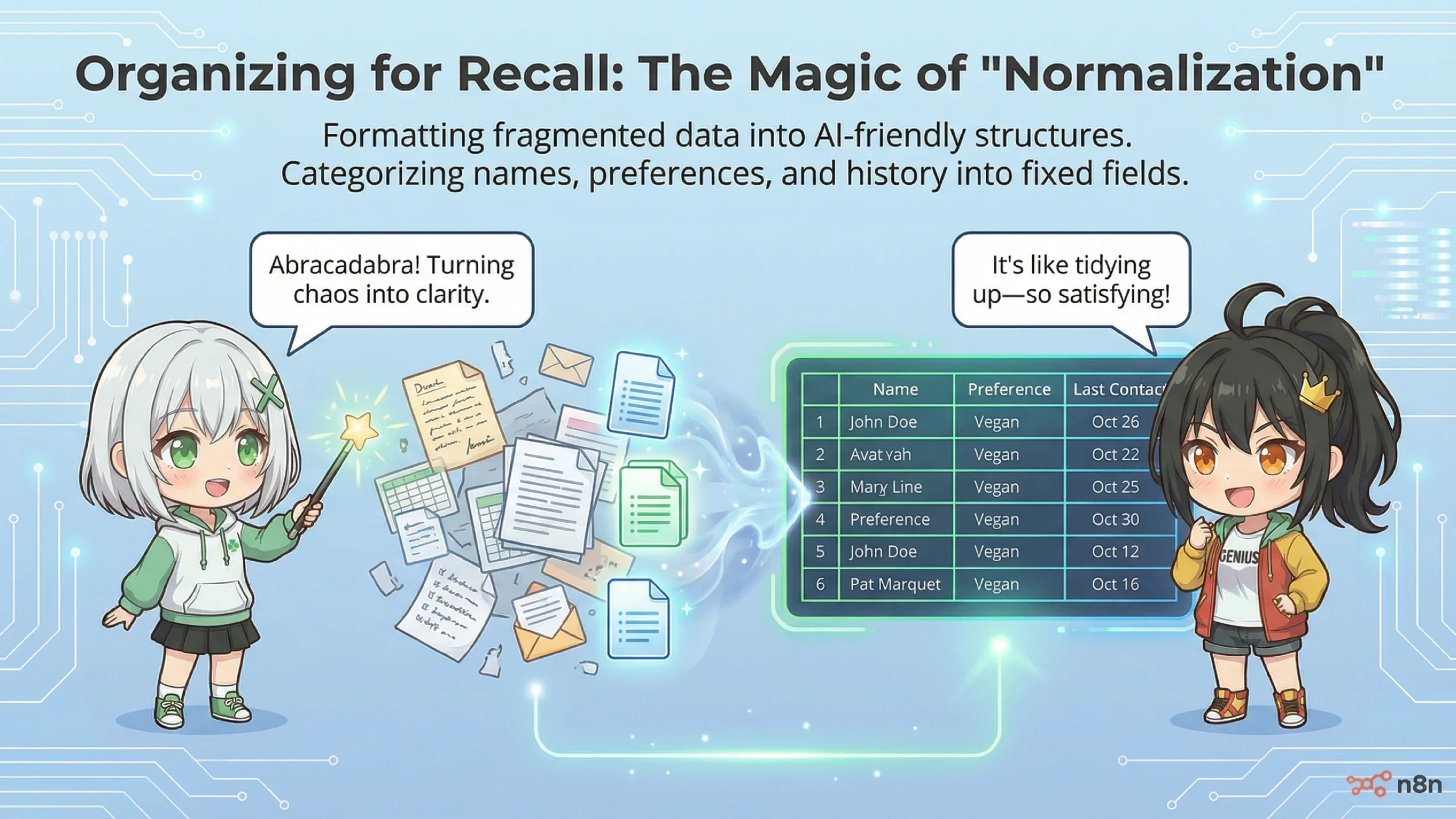

There are two key points: "Normalization" and "Hydration." If you carefully manage how information enters and leaves memory, your AI's recall can improve dramatically.

Normalization? Sounds complicated...

Simply put, it means "converting data from various formats into a unified format." Whether it's a WhatsApp message, Slack message, or email, you convert everything to the same format before saving it to the database.

Oh, I get it! Like packing for a move—putting all your different stuff into the same-sized boxes!

Great analogy! That's exactly the idea. In n8n, I'd recommend converting to a "standard message object" early in the workflow.

📦 Example Standard Message Object
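Here is a minimal TypeScript sketch of what such an object could look like. Only `session_id` and `user_id` come from the conversation. `channel`, `role`, `text`, `timestamp`, and `raw_message_id` are assumed fields. `isStandardMessage` is a hypothetical runtime check you might run in a Code node before saving. Strip the type annotations to use the logic as JavaScript.

```typescript
// Hypothetical standard message object. Only session_id and user_id are named
// in the article; every other field is an assumption for illustration.
interface StandardMessage {
  session_id: string; // groups messages belonging to one conversation
  user_id: string; // stable ID for the person, shared across channels
  channel: "whatsapp" | "slack" | "email";
  role: "user" | "assistant";
  text: string; // plain-text message body
  timestamp: string; // ISO 8601 in UTC
  raw_message_id: string; // the channel's own message ID, useful for de-duplication
}

const CHANNELS = ["whatsapp", "slack", "email"];
const STRING_FIELDS = ["session_id", "user_id", "text", "timestamp", "raw_message_id"];

// Runtime check: data arriving in a Code node is untyped, so verify the shape
// before writing it to the database.
function isStandardMessage(x: unknown): x is StandardMessage {
  if (typeof x !== "object" || x === null) return false;
  const m = x as Record<string, unknown>;
  return (
    STRING_FIELDS.every((k) => typeof m[k] === "string") &&
    CHANNELS.indexOf(m.channel as string) !== -1 &&
    (m.role === "user" || m.role === "assistant")
  );
}

const example: StandardMessage = {
  session_id: "whatsapp:819012345678",
  user_id: "user_0042",
  channel: "whatsapp",
  role: "user",
  text: "My name is Tanaka",
  timestamp: "2024-05-01T09:30:00.000Z",
  raw_message_id: "wamid.EXAMPLE",
};
```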

I see, there are various fields like session_id and user_id.

The golden rule is "Normalize before branching." Before you split into WhatsApp processing, Slack processing, etc., convert everything to the same format first—it makes downstream processing much easier.
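The "normalize before branching" rule can be sketched as one small adapter per channel, with every adapter producing the same object. The payload shapes below are simplified assumptions loosely modeled on a WhatsApp Cloud API inbound message and a Slack Events API `message` event. Check them against the payloads your webhooks actually receive. The `identityMap` linking channel IDs to one `user_id` is hypothetical; in practice it would be a lookup against your users table. The code is TypeScript; strip the types for a JavaScript Code node.

```typescript
// "Normalize before branching": each channel gets its own small adapter, and
// everything downstream only ever sees StandardMessage.
type Channel = "whatsapp" | "slack";

interface StandardMessage {
  session_id: string;
  user_id: string;
  channel: Channel;
  role: "user";
  text: string;
  timestamp: string; // ISO 8601, UTC
}

// Simplified payload shapes (assumed); real webhooks carry many more fields.
interface WhatsAppMessage {
  from: string; // sender's phone number
  id: string;
  timestamp: string; // Unix seconds as a string
  type: string;
  text?: { body: string }; // present for text messages
}

interface SlackMessageEvent {
  type: string;
  user: string;
  channel: string;
  text: string;
  ts: string; // Unix seconds with a fractional part, as a string
}

// Hypothetical identity table linking channel-specific IDs to one user_id.
const identityMap: Record<string, string> = {
  "whatsapp:819012345678": "user_0042",
  "slack:U012AB3CD": "user_0042",
};

function resolveUserId(channelKey: string): string {
  // Fall back to the channel-specific key for people not linked yet.
  return identityMap[channelKey] ?? channelKey;
}

function toIso(unixSeconds: string): string {
  return new Date(Number(unixSeconds) * 1000).toISOString();
}

function normalizeWhatsApp(msg: WhatsAppMessage): StandardMessage {
  const key = `whatsapp:${msg.from}`;
  return {
    session_id: key,
    user_id: resolveUserId(key),
    channel: "whatsapp",
    role: "user",
    text: msg.text?.body ?? "", // non-text messages (images etc.) get empty text here
    timestamp: toIso(msg.timestamp),
  };
}

function normalizeSlack(ev: SlackMessageEvent): StandardMessage {
  return {
    session_id: `slack:${ev.channel}:${ev.user}`,
    user_id: resolveUserId(`slack:${ev.user}`),
    channel: "slack",
    role: "user",
    text: ev.text,
    timestamp: toIso(ev.ts),
  };
}

const fromWhatsApp = normalizeWhatsApp({
  from: "819012345678",
  id: "wamid.EXAMPLE",
  timestamp: "1714555800",
  type: "text",
  text: { body: "My name is Tanaka" },
});
const fromSlack = normalizeSlack({
  type: "message",
  user: "U012AB3CD",
  channel: "C0123456",
  text: "What's my name?",
  ts: "1714555800.000100",
});
// Both carry user_id "user_0042" and timestamp "2024-05-01T09:30:00.000Z".
```

After this step, a single Postgres node can save either result, and any branching happens on fields of the standard object rather than on raw payloads.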

But aren't WhatsApp webhooks super complicated? I heard there are tons of extra events like status updates coming in.

You're right. WhatsApp sends "Sent," "Read," and other status update events to the same webhook, so you often need to filter them first. The standard approach is to use n8n's Code node with JavaScript to check if there's an actual message in the payload.
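Here is a minimal sketch of that filter, written in TypeScript (strip the types for a JavaScript Code node). The webhook shape is a simplified assumption based on the WhatsApp Cloud API layout, where inbound messages arrive under `entry[].changes[].value.messages` and status updates under `value.statuses`. Verify it against a real payload before relying on it.

```typescript
// Simplified WhatsApp Cloud API webhook shape (assumed). The same webhook
// receives both inbound messages and delivery/read status updates.
interface WAMessage {
  from: string;
  id: string;
  type: string;
  text?: { body: string };
}

interface WAStatus {
  id: string;
  status: string; // e.g. "sent", "delivered", "read"
}

interface WhatsAppWebhook {
  object?: string;
  entry?: Array<{
    changes?: Array<{
      field?: string;
      value?: { messages?: WAMessage[]; statuses?: WAStatus[] };
    }>;
  }>;
}

// Collect real inbound messages; status-only events produce an empty array.
function extractInboundMessages(payload: WhatsAppWebhook): WAMessage[] {
  const found: WAMessage[] = [];
  for (const entry of payload.entry ?? []) {
    for (const change of entry.changes ?? []) {
      for (const msg of change.value?.messages ?? []) {
        found.push(msg);
      }
    }
  }
  return found;
}

const statusOnly: WhatsAppWebhook = {
  object: "whatsapp_business_account",
  entry: [{ changes: [{ field: "messages", value: { statuses: [{ id: "wamid.EXAMPLE", status: "read" }] } }] }],
};

const realMessage: WhatsAppWebhook = {
  object: "whatsapp_business_account",
  entry: [
    {
      changes: [
        {
          field: "messages",
          value: { messages: [{ from: "819012345678", id: "wamid.EXAMPLE2", type: "text", text: { body: "Hello" } }] },
        },
      ],
    },
  ],
};
// extractInboundMessages(statusOnly)  → []        (stop here)
// extractInboundMessages(realMessage) → 1 message (continue to normalization)
```

One option is to run this over each incoming item in the Code node and return only the messages it finds, so status events produce no output. Another is to follow it with an IF node that checks whether the result is empty.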

Got it. And the other one, hydration? That's not about drinking water, is it?

Hehe, in tech terms it means "injecting data to activate something." By injecting the necessary context into a stateless AI right before inference, the AI can behave as if it's "always remembered you."

*Hydration is an IT term referring to the process of injecting external data into an empty template or stateless system to make it "ready to use."

Oh! So when a user sends a message, you pull up past conversations from the database and hand them to the AI saying "Here, read this first"?

Exactly right, Ete-senpai! This is sometimes called "Context Engineering."

Think of it like a mother who knows her child inside out. When the kid just says "I'm hungry," she intuits "This one loves curry, and after practice today they're tired, so I should make a large portion."

That's wonderful...! It would be amazing if AI could become such a thoughtful assistant.

For implementation in n8n, the basic flow is: first retrieve user info and recent chat history with a Postgres node, then combine them in a Code node and inject them into the system prompt.

🔧 Basic Steps for Hydration

- Retrieve User Info: Get name and metadata from the users table

- Retrieve Chat History: Get the last N messages from chat_messages table

- Build Context: Combine retrieved data into text format

- Inject into AI: Embed in system prompt and send to LLM
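The four steps can be sketched as follows. The table names `users` and `chat_messages` come from the steps themselves. The column names, the limit N = 10, and the `buildSystemPrompt` helper are assumptions for illustration. The SQL binds the user ID as a query parameter (`$1`) instead of concatenating it into the string. The code is TypeScript; strip the types to run it in a JavaScript Code node.

```typescript
// Hypothetical queries for the two Postgres nodes (steps 1 and 2).
// Table names come from the article; column names are assumptions.
const USER_QUERY = "SELECT name, metadata FROM users WHERE user_id = $1";
const HISTORY_QUERY =
  "SELECT role, text, created_at FROM chat_messages " +
  "WHERE user_id = $1 ORDER BY created_at DESC LIMIT 10"; // N = 10 here

interface UserRow {
  name: string;
  metadata: Record<string, string>;
}

interface HistoryRow {
  role: "user" | "assistant";
  text: string;
  created_at: string;
}

// Steps 3 and 4: turn the query results into text and embed it in the system prompt.
function buildSystemPrompt(user: UserRow | undefined, historyNewestFirst: HistoryRow[]): string {
  let profile: string[];
  if (user) {
    const meta = user.metadata;
    profile = ["Name: " + user.name].concat(Object.keys(meta).map((k) => k + ": " + meta[k]));
  } else {
    profile = ["(no stored profile yet)"];
  }

  // The query returns newest first; reverse a copy so the model reads the
  // conversation in chronological order.
  const transcript = historyNewestFirst
    .slice()
    .reverse()
    .map((h) => h.role + ": " + h.text);

  return [
    "You are a helpful assistant.",
    "## What you know about the user",
    ...profile,
    "## Recent conversation (oldest first)",
    ...(transcript.length > 0 ? transcript : ["(no previous messages)"]),
    "Use this context and do not ask for information you already have.",
  ].join("\n");
}

const systemPrompt = buildSystemPrompt({ name: "Tanaka", metadata: { plan: "premium" } }, [
  { role: "assistant", text: "Nice to meet you, Tanaka!", created_at: "2024-05-01T09:30:05Z" },
  { role: "user", text: "My name is Tanaka", created_at: "2024-05-01T09:30:00Z" },
]);
```

The resulting string would then be referenced from the AI node's system prompt field with an expression. Putting the history into the system prompt follows the steps above. Passing the history as separate chat turns is a common alternative.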

I see. Normalization organizes and saves the information, and hydration retrieves it when needed and passes it to the AI... it's a cycle!

By the way, what happens if the AI throws an error? Like an API timeout in the middle of a conversation?

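Good question. I'd recommend creating "Shared Error Paths." Instead of writing individual error handling in each workflow, create one dedicated error workflow that starts with n8n's Error Trigger node. Then select it as the error workflow in each workflow's settings so everything is handled in one place.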
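Here is a minimal sketch of the Code node that could sit right after the Error Trigger in such a shared error workflow, turning each failure into one consistently formatted alert. The input shape is modeled on the sample output in n8n's Error Trigger documentation. Some fields may be missing depending on how the run failed, so they are optional here. The timeout check is a simple wording heuristic, not an n8n feature. The code is TypeScript; strip the types for a JavaScript Code node.

```typescript
// Shape modeled on the sample output in n8n's Error Trigger documentation;
// some fields (e.g. url, id) may be absent depending on how the run failed.
interface ErrorTriggerItem {
  execution: {
    id?: string;
    url?: string;
    error: { message: string; stack?: string };
    lastNodeExecuted?: string;
    mode?: string;
  };
  workflow: { id?: string; name: string };
}

// Heuristic only: treat "timeout" / "timed out" wording as a retryable failure.
function isLikelyTimeout(message: string): boolean {
  return /timed? ?out/i.test(message);
}

// Build one consistent alert text for every workflow that routes errors here.
function formatErrorAlert(item: ErrorTriggerItem): string {
  const e = item.execution;
  const kind = isLikelyTimeout(e.error.message) ? "TIMEOUT (retryable)" : "ERROR";
  return [
    `[${kind}] Workflow "${item.workflow.name}" failed`,
    `Node: ${e.lastNodeExecuted ?? "unknown"}`,
    `Message: ${e.error.message}`,
    e.url ? `Execution: ${e.url}` : `Execution ID: ${e.id ?? "unknown"}`,
  ].join("\n");
}

const alertText = formatErrorAlert({
  execution: {
    id: "231",
    url: "https://n8n.example.com/execution/231",
    error: { message: "Request timed out after 30000ms" },
    lastNodeExecuted: "AI Agent",
    mode: "webhook",
  },
  workflow: { id: "7", name: "WhatsApp Support Bot" },
});
```

The alert text can then go to a Slack or email node. Because every workflow points at the same error workflow, changing the alert format means editing one Code node.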

Makes sense—managing it in one place would make maintenance easier too.

Also, for critical actions like payment approvals, I'd recommend implementing "Human-in-the-Loop," a mechanism that requires a human to approve the action before the AI carries it out.

*Human-in-the-Loop (HITL) is a design approach that incorporates human verification and approval steps within AI or automation system workflows.
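Here is a minimal sketch of the routing decision in front of such an approval step. The action types, the `amount` field, and the allowlist are hypothetical. The `needs_approval` branch is where you would pause for a person, for example with an n8n Wait node or an approval message sent to a reviewer. The code is TypeScript; strip the types for a JavaScript Code node.

```typescript
// Hypothetical action types the AI agent might propose.
type ActionType = "reply" | "lookup_order" | "refund" | "payment";

interface ProposedAction {
  type: ActionType;
  description: string;
  amount?: number; // hypothetical field for money-moving actions
}

type Decision =
  | { route: "auto"; action: ProposedAction }
  | { route: "needs_approval"; action: ProposedAction; approvalMessage: string };

// Allowlist of actions that may run without a human. Anything not listed,
// including any action type added later, falls through to human review.
const AUTO_APPROVED: ActionType[] = ["reply", "lookup_order"];

function routeAction(action: ProposedAction): Decision {
  if (AUTO_APPROVED.indexOf(action.type) !== -1) {
    return { route: "auto", action };
  }
  const amountText = action.amount !== undefined ? ` (amount: ${action.amount})` : "";
  return {
    route: "needs_approval",
    action,
    approvalMessage: `Approval needed: ${action.type}${amountText}. ${action.description}`,
  };
}

const decision = routeAction({ type: "payment", description: "Pay invoice INV-001", amount: 50000 });
// decision.route → "needs_approval"
```

Using an allowlist instead of a blocklist means the system fails closed: a new, unanticipated action type goes to a human by default rather than running automatically.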

True, leaving everything to AI can be scary. Especially when money's involved—humans should definitely check that.

⚠️ Implementation Notes

- Passing entire conversation histories consumes many tokens, so it's common to limit to the last N messages

- Be careful not to store sensitive information (like passwords) in memory

- It's recommended to back up your database regularly
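The first two notes can be sketched in a few lines of TypeScript (strip the types for a JavaScript Code node). `trimHistory` keeps the last N messages and then drops the oldest until a rough token estimate fits a budget. The 4-characters-per-token rule is a crude heuristic for English text, not a real tokenizer. `redact` masks obviously sensitive patterns before a message is saved. The regexes are hypothetical and illustrative only and will not catch every kind of sensitive data.

```typescript
interface HistoryMessage {
  role: "user" | "assistant";
  text: string;
}

// Rough heuristic: about 4 characters per token for English text.
// This is NOT a real tokenizer; use your model's tokenizer for exact counts.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

// Keep the last maxMessages entries, then drop the oldest ones until the
// estimated token total fits within maxTokens.
function trimHistory(history: HistoryMessage[], maxMessages: number, maxTokens: number): HistoryMessage[] {
  // Guard: slice(-0) would return the whole array.
  const recent: HistoryMessage[] = maxMessages > 0 ? history.slice(-maxMessages) : [];
  let total = recent.reduce((sum, m) => sum + estimateTokens(m.text), 0);
  let start = 0;
  while (start < recent.length && total > maxTokens) {
    total -= estimateTokens(recent[start].text);
    start++;
  }
  return recent.slice(start);
}

// Hypothetical redaction rules applied BEFORE a message is written to memory.
// Illustrative only: these regexes catch obvious patterns, not all sensitive data.
const REDACTIONS: Array<[RegExp, string]> = [
  [/\b\d(?:[ -]?\d){12,15}\b/g, "[REDACTED_CARD]"], // 13-16 digit card-like numbers
  [/(password|passwd|pwd)\s*[:=]\s*\S+/gi, "$1: [REDACTED]"],
];

function redact(text: string): string {
  return REDACTIONS.reduce((out, [pattern, replacement]) => out.replace(pattern, replacement), text);
}
```

For example, `redact("my password: hunter2")` returns `"my password: [REDACTED]"`. Run `redact` in the normalization step so that unredacted text never reaches the database.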

Yay! Now my AI should finally remember conversations properly!

Yes, the Central Memory architecture should go a long way toward curing AI Amnesia.

📝 Key Takeaways

- Cause of AI Amnesia: LLMs are stateless, so they retain nothing between requests unless you supply the context again

- Central Memory: Architecture that centrally manages memory in an external database

- Normalization: Converting inputs from different channels to a unified format for storage

- Hydration: Retrieving context from the database before inference and injecting it into the AI

- Operational Tips: Ensure stability and safety with Shared Error Paths and Human-in-the-Loop

As expected from you, Ran! Now I can level up my chatbot!

Oh, it's nothing... It might feel difficult at first, but if you implement it step by step, you'll be fine.

Alright, I'm going to try this right away! If you want to give your AI some memory power too, give Central Memory a shot! See you!

See you next time. If you have any questions, feel free to leave a comment!